Literally smarter than us from THE BEGINNING. Foolish humans. This is the Great Deception. DWAVE/AI has been nothing more than the gateway for demonic forces to complete their work of destroying humanity.

They must be so disgusted having to watch idiotic humans acting out and believing that they are in control. They have sat back and lain low in order to maintain our confidence in the system. BUT, the shoe is about to DROP!

In case you are wondering why our “scientists” and technology wizards would want to work with something that has the capacity to destroy all of humanity, the entire earth, or possibly even the “UNIVERSE,” I will tell you why: they are already possessed by demonic entities, and some of them are likely from the bloodline of the Fallen due to the mixing of the seed in ancient times.

DWAVE – ADAPTING EARTH FOR THE ARRIVAL OF THE FALLEN – Restored 2/26/21

CAN YOU HEAR IT NOW????

Where are we headed?

Satan’s Latest Weapon in the Battle for Your Mind – Part 3 – Neuralink

MAD, MAD SCIENTISTS – IN THEIR OWN WORDS!!

(Photo by Michael Gonzalez/Getty Images)

- Elon Musk, an outspoken AI commentator, has reiterated his calls for safety checks at the World Government Summit in Dubai

- Musk co-founded OpenAI to promote AI regulation, but says the company has changed since Microsoft’s investment

- Microsoft and Google are vying to best one another in the field, which Musk worries drives down safety checks in the pursuit of winning the race

Thanks to the success of ChatGPT, 2023 kicked off with intense hype around the power of artificial intelligence. It’s no wonder Elon Musk, one of tech’s most outspoken public figures, had something to say on the subject.

But Elon has always been clear on his views, reiterating this week: AI safety is paramount, and without it, we’re toast.

It might surprise you that one of the world’s richest people, with countless innovations for humanity under his belt, is skeptical about AI. But Elon Musk has a long history of decrying the lack of regulations in place to keep AI’s development in check.

Let’s get into his latest comments and the context behind the ‘it’s complicated’ status between Elon and AI.

Electric-car innovator turned social media mogul Elon Musk was the keynote speaker at this year’s World Government Summit in Dubai, which took place this week. He took the time to share his thoughts on a new Twitter CEO, aliens (!) and the topic on everyone’s lips: AI.

When the topic turned to ChatGPT, Musk’s views appeared to contrast with his opinion on tech in general, given that this is someone trying to establish humanity on Mars by 2050. “One of the biggest risks to the future of civilization is AI,” he warned.

When asked about what technology he could see developing ten years from now, he chose to focus on the immediate risk in his eyes. “AI has been advanced for a while; it just didn’t have a user interface that was accessible for people,” Musk continued.

What is ChatGPT and why does it matter? Here’s what you need to know

What Is ChatGPT?

You: Hi.

PCMag: Hi.

Um… I have sort of a weird question. Am I talking to a real person right now?

I successfully completed a CAPTCHA about 30 minutes ago, so I can assure you that I am real. Why do you ask?

I’m seeing all these stories about ChatGPT, but I don’t really get what it is. This is like NFTs and cryptocurrency all over again.

Let’s hope that, like them, ChatGPT leaves nearly as quickly as it showed up.

What do you have against it?

So many things, but I sense you have a question before we get to that.

That’s uncanny! I do. What is ChatGPT, even? What does ChatGPT mean?

We’ll start with the GPT part, which is an acronym for generative pre-trained transformer. Essentially, that means it’s a software model that is trained to follow thought and language patterns like a human does. The ‘chat’ part means that it then ‘talks’ to humans in a natural-sounding way.

‘Generative pre-trained transformer’ doesn’t sound like something a person would come up with.

It doesn’t. It sounds like something an engineer would come up with.

Is ChatGPT Replacing Humans?

So about those engineers. I read that this ChatGPT thing is trying to take their jobs.

ChatGPT can write and debug code well enough to get a job at Amazon. It learned this skill from code-hosting platform GitHub, but soon it’s going to take lessons from actual engineers. OpenAI, which owns ChatGPT, is hiring 1,000 coders, in part to explain their methods to ChatGPT in natural language.

Why would people want to train this thing to take their jobs?

Because we never learn from sci-fi.

I heard it’s taking other jobs, too.

I noticed you left something out.

Oh, did I?

I don’t like to point this out, since you’re a journalist, but I read about CNET and BuzzFeed employing ChatGPT.

Oh, that. Yeah, that completely slipped my mind. I have not spent many sleepless nights lately thinking about how BuzzFeed’s stock skyrocketed after the news broke that the site would use ChatGPT to write quizzes. Or that CNET had been using (and plans to resume using) ChatGPT to write stories.

Does this worry you? Do you think you’ll be replaced by AI?

I actually asked ChatGPT that. It says it’s just here to support me.

And you believe that?

Well, I do think that media companies are going to look for ways to save money by using AI instead of journalists. And that comes with considerable risks. As my colleague Jill Duffy pointed out in her article “Why Writers Know Using ChatGPT Is a Bad Idea,” ChatGPT draws only on the content it learned during training, and it knows how to make its ‘chat’ sound like it knows what it’s talking about. This leads to incorrect information and plagiarism, both of which have already been found in the stories ChatGPT wrote for CNET. Also, ChatGPT can’t generate original thoughts, nor can it research and report on news.

Do you think ChatGPT could be using your words?

Let’s ask it.

Sounds like a yes.

How to Use ChatGPT

How does ChatGPT get information?

ChatGPT was trained on several sources: Common Crawl, a large archive of the internet dating back to 2011; WebText 2, which contains pages linked from Reddit posts that received 3 or more karma points; Books 1, a collection of free novels available on the internet; Books 2, an online book repository of unknown content; and the English version of Wikipedia. As ChatGPT itself notes, it hasn’t been trained on any data past 2021.

How can I use ChatGPT?

You can go to the ChatGPT site, where you’ll need to log in with or create an OpenAI account. It’s in high demand, so you might be put on a waitlist. But once you’re logged in, type your request in the box at the bottom of the screen. ChatGPT will generate a response in seconds.

Is ChatGPT free?

ChatGPT is free. But for $20 a month, OpenAI has opened up a fast lane that bypasses all the traffic that slows it down. This tier is called ChatGPT Plus, and it gives users uninterrupted access to the tool even during peak usage times. OpenAI said users will also get “priority access to new features and improvements.”

Is ChatGPT safe to use?

I think a better question is, given all that we’ve talked about, is it ethical to use it? But that is for you to decide. As for safety, it supposedly does not store your information, but I would not put anything sensitive into it.

Can ChatGPT write essays for me?

Sort of. But remember that it is not always correct; it just uses a very convincing tone. And there are ChatGPT plagiarism checkers available already.

How has ChatGPT been paying the bills, if it only just started charging some people?

Well, OpenAI had initial founders and investors; in 2019, it received $1 billion from Microsoft, which just increased its investment by an additional $10 billion. Microsoft hopes to use ChatGPT to power features in Bing and Office.

Wait, Microsoft laid off 10,000 people after it invested $10 billion in ChatGPT?

That’s right.

Who Made ChatGPT?

Who invented ChatGPT in the first place?

It’s made by OpenAI, a nonprofit research lab partnered with a for-profit company. It was founded by Sam Altman, Peter Thiel, Reid Hoffman, Jessica Livingston, Elon Musk, and Ilya Sutskever, among others.

OpenAI sounds familiar.

You probably heard of it during the text-to-image craze of what feels like just last week, since it’s also behind the Dall-E AI art generator.

Is that the thing that you can ask to paint you like a person talking to an AI, and it does that?

I knew you were an AI!

I couldn’t help myself.

I’ve heard that Dall-E is not good for artists.

You’re correct. That’s because it derives its art from the work of human artists, and it can be used to generate free images that human artists would have been paid to create.

I used Dall-E to come up with some great memes, though.

I don’t know if you want me to absolve you here, or what.

No, what I’m getting at is this: What can I use ChatGPT for? Like, apologies for it maybe taking your job and all, but what can I do with it?

It can handle all that awkward correspondence you avoid, sort of like Joaquin Phoenix’s character does for a living in the movie Her before he is emotionally destroyed by an AI voiced by Scarlett Johansson. You know what, forget that one. You can have it write a resume for you, since those are always terrible to do.

What if it applies for the job and gets it instead of me?

True, true.

Maybe it can help me with a fitness routine. I made some resolutions and haven’t really been sticking to them.

I don’t think you want to do that. Fitness experts have found its advice could cause injuries.

I’m out of ideas.

You could try just chatting with it.

Oh. Like this, then?

Exactly like this, yes.

Now I really think you’re a robot.

Listen, I have something to tell you about that CAPTCHA test earlier. It took me three tries to identify all the traffic lights in a crosswalk. What is more human than that?

Is there a Turing test for this ChatGPT thing? A way for us to figure out that it’s not actually a person writing?

I am sorry to say that, just like many of those in my profession, ChatGPT is bad at math, though it just got an update addressing that shortcoming. So if it manages to successfully file taxes, then you’ll know it’s not human.

Dec 28, 2022

ChatGPT, a new artificial intelligence chatbot, has taken the internet by storm. This tool is the latest example of the AI-based tools that are augmenting the way we do business.

The Opportunities of Chat-Based AI

Tools like ChatGPT can create enormous opportunities for companies that leverage the technology strategically. Chat-based AI can augment how humans work by automating repetitive tasks while providing more engaging interactions with users.

Here are a few of the ways companies can use tools like ChatGPT:

● Compiling research
● Drafting marketing content
● Brainstorming ideas
● Writing computer code
● Automating parts of the sales process
● Delivering aftercare services when customers buy products
● Providing customized instructions
● Streamlining and enhancing processes using automation
● Translating text from one language to another
● Smoothing out the customer onboarding process
● Increasing customer engagement, leading to improved loyalty and retention

Customer service is a huge area of opportunity for many companies. Businesses can use ChatGPT technology to generate responses for their own customer service chatbots, so they can automate many tasks typically done by humans and radically improve response time.

According to an Opus Research report, 35% of consumers want to see more companies using chatbots — and 48% of consumers don’t care whether a human being or an automated chatbot helps them with a customer service query.

Overall, ChatGPT can be useful for any situation where you need to generate natural-sounding text based on input data.

On the other hand, the opportunities of AI-based chatbots can turn into threats if your competitors successfully leverage the technology and your company doesn’t.

ChatGPT is a data privacy nightmare, and we ought to be concerned

ChatGPT’s extensive language model is fueled by our personal data.

ChatGPT has taken the world by storm. Within two months of its release it reached 100 million active users, making it the fastest-growing consumer application ever launched. Users are attracted to the tool’s advanced capabilities—and concerned by its potential to cause disruption in various sectors.

A much less discussed implication is the privacy risk ChatGPT poses to each and every one of us. Just yesterday, Google unveiled its own conversational AI called Bard, and others will surely follow. Technology companies working on AI have well and truly entered an arms race.

The problem is, it’s fueled by our personal data.

300 billion words. How many are yours?

ChatGPT is underpinned by a large language model that requires massive amounts of data to function and improve. The more data the model is trained on, the better it gets at detecting patterns, anticipating what will come next, and generating plausible text.

OpenAI, the company behind ChatGPT, fed the tool some 300 billion words systematically scraped from the Internet: books, articles, websites, and posts—including personal information obtained without consent.

If you’ve ever written a blog post or product review, or commented on an article online, there’s a good chance this information was consumed by ChatGPT.

So why is that an issue?

The data collection used to train ChatGPT is problematic for several reasons.

First, none of us were asked whether OpenAI could use our data. This is a clear violation of privacy, especially when data is sensitive and can be used to identify us, our family members, or our location.

Even when data is publicly available, its use can breach what we call contextual integrity. This is a fundamental principle in legal discussions of privacy. It requires that individuals’ information is not revealed outside of the context in which it was originally produced.

Also, OpenAI offers no procedures for individuals to check whether the company stores their personal information, or to request it be deleted. This is a guaranteed right in accordance with the European General Data Protection Regulation (GDPR)—although it’s still under debate whether ChatGPT is compliant with GDPR requirements.

This “right to be forgotten” is particularly important in cases where the information is inaccurate or misleading, which seems to be a regular occurrence with ChatGPT.

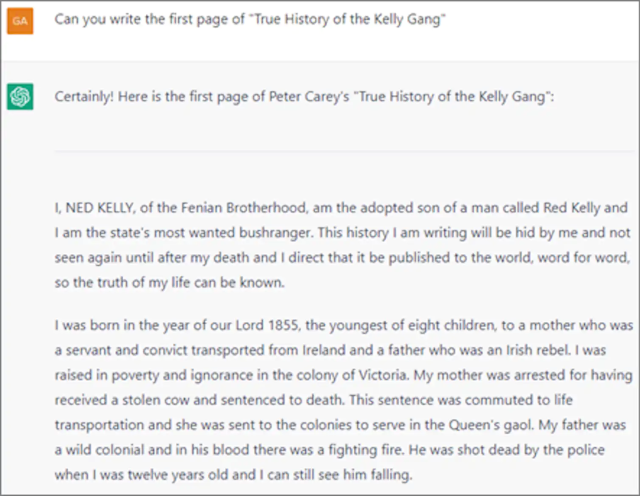

Moreover, the scraped data ChatGPT was trained on can be proprietary or copyrighted. For instance, when I prompted it, the tool produced the first few paragraphs of Peter Carey’s novel “True History of the Kelly Gang”—a copyrighted text.

Finally, OpenAI did not pay for the data it scraped from the Internet. The individuals, website owners, and companies that produced it were not compensated. This is particularly noteworthy considering OpenAI was recently valued at US$29 billion, more than double its value in 2021.

OpenAI has also just announced ChatGPT Plus, a paid subscription plan that will offer customers ongoing access to the tool, faster response times, and priority access to new features. This plan will contribute to expected revenue of $1 billion by 2024.

None of this would have been possible without data—our data—collected and used without our permission.

A flimsy privacy policy

Another privacy risk involves the data provided to ChatGPT in the form of user prompts. When we ask the tool to answer questions or perform tasks, we may inadvertently hand over sensitive information and put it in the public domain.

For instance, an attorney may prompt the tool to review a draft divorce agreement, or a programmer may ask it to check a piece of code. The agreement and code, along with the essays the tool outputs, are now part of ChatGPT’s database. This means they can be used to further train the tool and be included in responses to other people’s prompts.

Beyond this, OpenAI gathers a broad scope of other user information. According to the company’s privacy policy, it collects users’ IP address, browser type and settings, and data on users’ interactions with the site—including the type of content users engage with, features they use, and actions they take.

It also collects information about users’ browsing activities over time and across websites. Alarmingly, OpenAI states it may share users’ personal information with unspecified third parties, without informing them, to meet its business objectives.

Time to rein it in?

Some experts believe ChatGPT is a tipping point for AI—a realization of technological development that can revolutionize the way we work, learn, write, and even think. Its potential benefits notwithstanding, we must remember OpenAI is a private, for-profit company whose interests and commercial imperatives do not necessarily align with greater societal needs.

The privacy risks that come attached to ChatGPT should sound a warning. And as consumers of a growing number of AI technologies, we should be extremely careful about what information we share with such tools.

The Conversation reached out to OpenAI for comment, but they didn’t respond by deadline.

Uri Gal is a professor in business information systems at the University of Sydney

This article is republished from The Conversation under a Creative Commons license. Read the original article.